Capturing and validating data, part 2

Written by Jason on August 20, 2013This is the second post that discusses various ways to validate your imported data. In part 1, we discussed how content check fields help ensure a source file hasn't been changed or corrupted, and how pattern matching lets you discard elements that don't meet a predefined pattern.

In this part, we'll cover data extraction, which allows you to validate and capture a subset of an element, e.g., the last word of a title on a web page.

We'll also look at data approval, which lets a dataset's owner approve or reject changes from other write-authorized users or automated imports before being applied to the dataset. This functionality can be used as part of a formal data submission/approval process or simply be used to put an extra set of human eyes on a critical dataset.

Let's start with data extraction. Basically, you'll select an individual element (in cell capture mode) or a column (in table capture mode) and use a regular expression to extract a portion of those contents and verify them.

We'll use the same US Energy Information Administration (EIA) weekly gas price table for our sample as we did in the prior post, which can be found on their website at:

http://www.eia.gov/petroleum/gasdiesel/

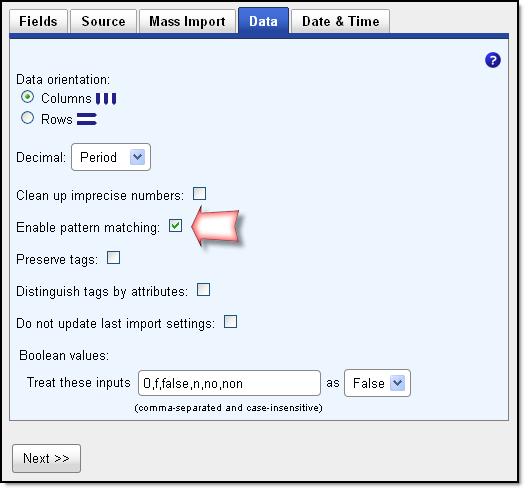

We'll import this HTML source as a table using the above URL. As before, we'll enable pattern matching in import step #2 so we can extract our region identifier.

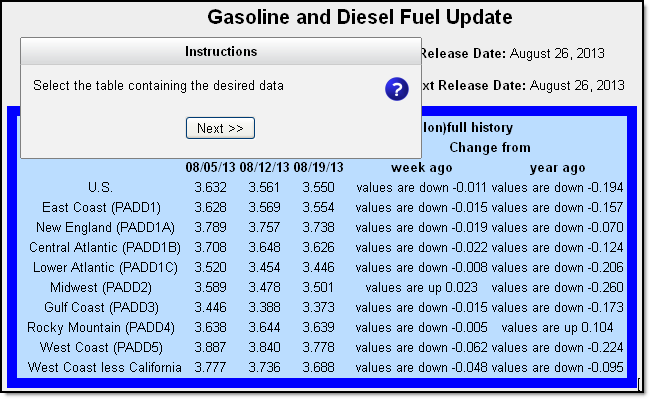

Continuing with the import process, we'll select the data table shown below and click Next.

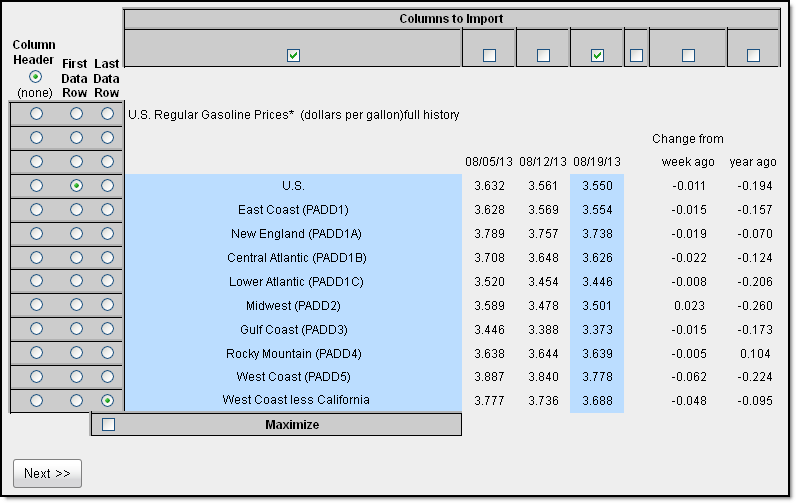

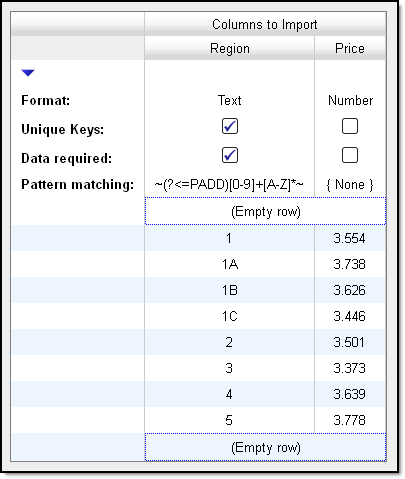

Below, you'll see we've already specified the table to capture and have selected our rows and columns.

We used pattern matching in the prior post and only kept the rows that contained the text PADD in the region field. This time, we'll do the same thing, but we're only going to keep the actual region identifier (1, 1A, 1B, etc.) and strip off the rest of the text.

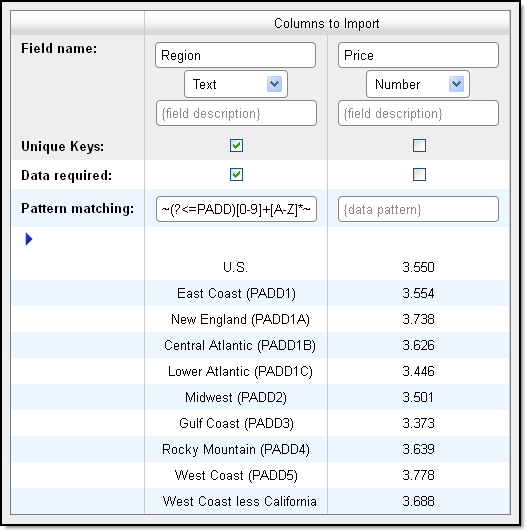

We'll name our fields, confirm that the data is stored in the correct format, and use a regular expression to extract and validate the part of the region field that we wish to keep.

Regular expressions (regex) allow you to isolate and extract a precise portion of text. They are quite powerful, and can seem a little intimidating at first, but they aren't as bad as you might think at first glance. This is the expression we'll use:

~(?<=PADD)[0-9]+[A-Z]*~

The tildes (~) enclosing the expression tell Tuhunga that this is a regex and the (?<=PADD) portion is looking for the characters PADD "behind" the text we want to keep (it's called a lookback). The [0-9]+ portion will extract one or more characters that immediately follow PADD in the range of 0 through 9 and the [A-Z]* portion will extract zero or more characters in the range A-Z (uppercase only).

Please refer to our regex article for more information.

Let's take a look at the next step once the regex has been applied:

As expected, only the PADD-containing rows were kept and only the extracted code remains, while the empty row placeholders indicate where data was discarded. As you can see, data extraction is a very powerful tool that not only lets you capture precisely what you want, but also to ensure that it meets the parameters you set. Let's move on to the next validation method.

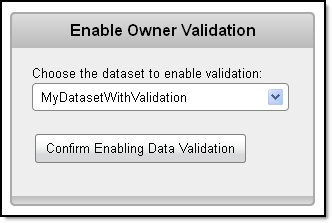

Owner validation is quite straightforward. All the dataset's owner needs to do is select the "Enable Validation" option under the Manage Data menu and choose the desired dataset, as shown below.

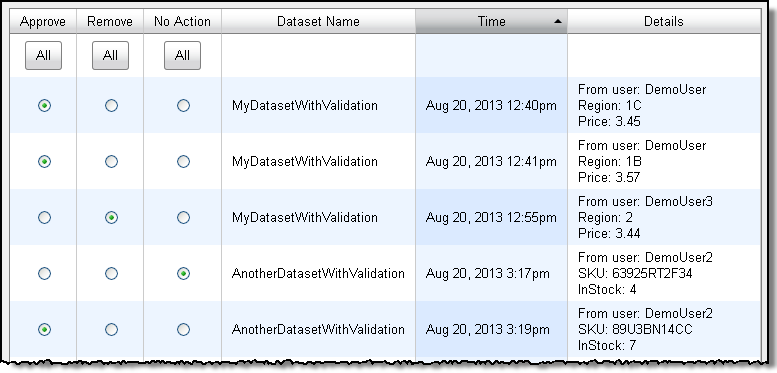

When a user imports into the dataset, the changes are queued up in the owner's account for review. The owner can see the proposed changes and accept or reject any or all of them.

If you're interested in even greater flexibility or control in validating incoming data, please get in touch - we have additional features available for enterprise-level users.