Commodity prices and candlestick charts

Written by Jason on September 6, 2011We'd like to highlight a recently arrived dataset of commodity prices dating back to the 60s. Not only does it contain the usual suspects like the energy and metals complexes, but some less commonly seen items like chicken, fertilizer components, and plywood. This dataset is available at no additional charge to all users.

We're also going to show you a relatively new addition to our visualization capabilities by demonstrating the candlestick chart by using this dataset. We'll examine the ratio of coal and LNG prices, and normalize for their heating capacity. While not exactly the cost that a Japanese generator would face (the prices lack transport costs, and no credit is given for power plant efficiency differences to name two), it seems like an interesting metric to examine over time.

As is our custom, read on to see how we created this chart.

Keep reading...Tags: analysis, charts, datasets, examples

Illustrating the aggregation functions

Written by Jason on July 20, 2011A common question is how to use (and think about) the aggregation functions. While we have a comprehensive reference guide, we thought it would be useful to illustrate them graphically, especially since they aren't single-step functions in a spreadsheet.

We're using the same data for each of these charts - you may have seen it before in an earlier post on natural gas pricing in various currencies. We'll use the minimum version of each function set for consistency, but you can also find maximums, means, sums, counts, standard deviations and variances.

We'll begin with the all_ and cond_ series. First, we'll show the chart, and then explain how each function works.

Looking at the legend in the top-left, the first (green) line is the monthly price of US city gate natural gas, from 2000 through April 2011.

The second (blue) line is the minimum price found over the entire time period, and was calculated with the formula shown in the legend.

The third (red) line is the minimum price found in the results where the year is 2005. This formula finds all of the results where the year of the Date field is 2005, and then returns the minimum value of NGCitygatePrice.

The fourth (dark yellow) line is the minimum price found in the results where the year is 1998. However, since there are no results earlier than 2000 (as determined by our filter), there are no values that satisfy the cond_min criteria, and the yellow line is at the zero mark.

The fifth (purple) line is the minimum price found in the entire dataset where the year is 1998 (note the 1 as the second parameter, as opposed to 0 in the prior yellow line).

Note that both of the all_ and cond_ functions return the same value for all rows in the result set. This is not the case for the rollup_ series.

As before, the first (green) line is the monthly price of US city gate natural gas.

The other (purple) line is the minimum price for each individual year and displayed over the entire time period. We find it useful to think of this function by rolling up the data by the consolidation fields (the year(Date) portion of the legend), and then applying the operation to each consolidated bucket.

Keep reading to see the back_ and forward_ function series demonstrated in chart form.

Keep reading...Tags: analysis, charts, examples

Introducing mass capture

Written by Jason on July 15, 2011Now import data when you don't know what it is or where it will be stored!

Well, it's not a new feature in the strictest sense - in fact, it's been available since February - but this is the first time we're mentioning it on the blog.

What it can do for you:

- get file(s) that are periodically updated, but with new names/URLs

- get data based on data from another source, e.g.:

- import data from multiple web pages based upon a list that you have access to in Google Docs

- use a "top searched" list from one website to get data from another site

- capture data from a file where the name changes daily or weekly

- get data from many individual sources while only needing to configure a single import

- and lots more that we haven't mentioned here, or even thought of!

How does it work? Well, we'll pull a few screenshots from Tutorial 6, Part II, which offers a detailed walkthrough to get you started. In addition, the help pages that are available at each step of the way will guide you through all the flexibility this feature offers. Let's run through an example.

Keep reading...Tags: examples, features, imports

US natural gas priced in yuan?? How to combine datasets in a single analysis

Written by Jason on May 18, 2011This will be the first blog post that deals with linking multiple distinct datasets together (though the tutorials and reference guide cover this topic extensively). For this example, we'll show you how to link foreign exchange rates with energy prices to calculate the price of natural gas that the US consumes in Canadian dollars (or roubles, yen, euros, ringgit, or one of dozens of other possibilities).

Conceptually, linking is similar to the vlookup function found in Excel, where you have a field in your primary dataset, and you want to use a field in a separate dataset that can be related to the first dataset by one or more fields. In our case, we have a range of natural gas prices (in US dollars) at different dates, and want to know what the gas prices would have been at each date in the foreign currency.

What we're doing is getting the spot price of natural gas for each month, and multiplying that price by the exchange rate for the month. We keep the price and exchange rate consistent by linking them together on the date field in each dataset.

This is easy to accomplish - linking the two groups together is done in seconds, and pulling the chart together takes a couple of minutes. Keep reading to see how it's done.

Keep reading...Tags: analysis, charts, datasets, examples

Introducing data extraction

Written by Jason on February 22, 2011In the last few months, we’ve been rolling out new features, and over the next little while, we’ll highlight some of them in the blog. Today, we’ll highlight data extraction using regular expressions.

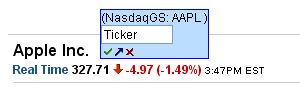

With this feature, you can capture the exact data you want, even if it’s not cleanly delimited in the source. In the example below, we want to capture the ticker, but it’s not a clean capture as it's surrounded by parentheses.

Without using a data extractor, the item would be stored as:

(NasdaqGS: AAPL)

However, if we use a regular expression to isolate the ticker, we’re able to capture only what we want:

AAPL

This feature is extremely useful in cleansing input before storage. We'll look at the extractor we used (it’s easier than it looks - we promise) as well as provide some useful links that explain how to build them.

Keep reading...Two new import options

Written by Jason on November 30, 2010Just a quick note for today. We’ve just added two new file format options to our roster of ways to import data: JSON, an often hidden, but commonly used standard for data exchange between various applications on the Internet, and spreadsheets from the online Google Docs suite.

As with the other seven supported file formats, when the data is located online (as is always the case with Google Docs), it can be imported automatically without any human intervention if the user wishes.

We hope that these two new options will make it easier to manage your data. We’ve got other import formats planned, so if you have a specific need that you’d like us to prioritize, please drop us a line.